NVIDIA has far more software engineers than many of its competitors have employees, and these engineers continue to squeeze more performance out of each generation of chips. Once again, NVIDIA wins in performance, but it faces increasing competition in power-constrained environments such as the Edge Data Center and the Embedded Edge, where Qualcomm and SiMa.ai, respectively, posted winning results. SiMa.ai built its MLSoC chip from the ground up as an embedded platform. Scaling down a data center AI chip just won’t cut it; to reach the power levels customers demand, one has to design an edge chip from the ground up. In the MLPerf 3.0 round, the company bested NVIDIA Jetson power efficiency for image classification by 47%, not bad for a first round. And remember the software-driven performance gains Qualcomm has achieved? I’d expect SiMa to continually increase its performance with each release of its TVM back-end software. Some examples of AI at the embedded edge include voice assistants that can understand and respond to user commands, sensors that can detect anomalies and trigger alerts, and autonomous vehicles that can recognize and respond to their surroundings in real time.

SiMa.ai shared this with us to show the broad range of image processing it handles at 15 watts, compared to NVIDIA Xavier AGX and Orin. The Open Division of MLPerf allows all types of tricks and changes, so long as the accuracy target is reached. The 3.0 results include new benchmarks in the Open Division, including a block-pruned BERT-Large over a network that delivered 100% accuracy and 2.8x better performance than its Closed Division submission.

As a testament to the importance of software optimizations over time, Qualcomm has demonstrated a 75% performance improvement and a 52% power efficiency improvement since it began this journey three years ago. The company’s Cloud AI100 was submitted on over 25 server platforms with 320 results, all of which are the best in their class in terms of power efficiency, latency, and throughput. “We’ve never come across Qualcomm at any of our prospective companies,” said Gopal Hegde, VP of Products at SiMa.ai. Note that these two companies don’t really compete, in spite of the common “Edge” terminology. Power matters for inference at the edge, both in the edge data center (Qualcomm) and in the embedded edge market (in this case, SiMa.ai). While NVIDIA GPUs deliver industry-leading performance, that lead comes at a cost beyond the purchase price: power consumption.

Deci delivered fantastic model optimizations for NVIDIA A100 and H100 GPUs, and these optimizations can be applied to virtually any hardware architecture. The amazing part of this story is that the optimization process is automatic! And Deci has the customer list to prove that it actually works, as well as hardware partners including NVIDIA, Intel, AWS, and many system vendors such as HPE.

Missing from this round is the H100 NVL, announced two weeks ago at GTC; NVIDIA just didn’t get it ready in time. But it should shine for large model inference like ChatGPT!

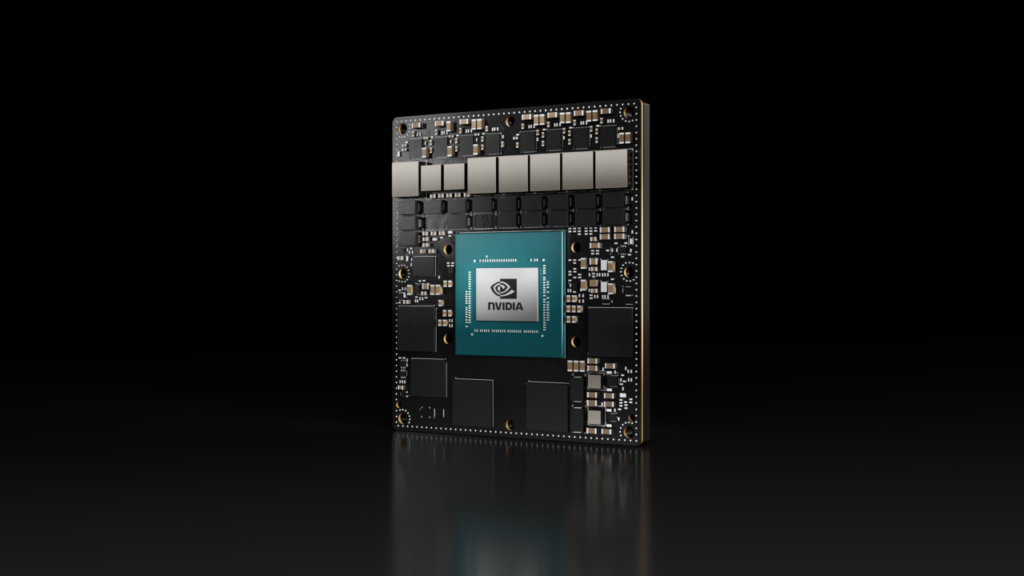

NVIDIA and others ran the benchmarks on the latest from NVIDIA: the H100, the L4, and the Jetson AGX Orin. NVIDIA and its partners ran and submitted benchmarks across the entire MLPerf 3.0 suite: image classification, object detection, recommendation, speech recognition, NLP (large language models), and 3D segmentation. And once again, no one was able to best NVIDIA on a head-to-head performance basis. The NVIDIA H100 brandishes a Transformer Engine, which dominated BERT in these MLPerf 3.0 benchmarks. While there are currently no benchmarks for (very) large language models such as GPT or Google LaMDA, MLCommons executive director David Kanter said the community is working on a new benchmark that will test inference (and presumably training) performance and power consumption of the 100B-parameter-class models that have recently created the iPhone moment for AI. While that implies a six-month wait, the current BERT benchmark is still very useful for assessing platforms for the smaller large language models that are typically extracted from models such as GPT-3. NVIDIA is quick to point out that many customers need a versatile AI platform, although that is primarily true in a data center setting; many edge AI apps use only one or two AI models, such as image classification or detection. Over 5,300 results were submitted, including 2,400 power results. Six new companies submitted benchmark runs, notably SiMa.ai and Neuchips.

Once every six months, MLCommons releases another round of benchmarks for AI inference processing, the job of running neural networks in production. OK, this story is long, but I think it’s worth telling. Qualcomm and SiMa.ai win in the Edge Data Center and the Embedded Edge, respectively, while Neuchips wins in data center recommendation for power.

Big News! There is a potential solution on the horizon, which we cover at the end, to vastly broaden the field of benchmark submissions: the Collective Knowledge Playground.
